How to Play Go

In March 2016, a program called AlphaGo, developed by Google’s DeepMind artificial intelligence team, beat Lee Sedol, one of the best go players alive. So we figured, why not learn a bit more about the program and about go itself?

By SoraNews24

http://en.rocketnews24.com/2016/03/15/how-to-play-go-the-game-that-humans-keep-losing-to-googles-highly-intelligent-computer-brain/

DeepMind, the company that developed AlphaGo, was co-founded in 2010 by chess prodigy and AI researcher Demis Hassabis. Google acquired DeepMind in 2014. So far, AlphaGo has studied a database of go matches that gave it the equivalent experience of playing the game for 80 straight years.

What makes go such an appealing target for DeepMind and Google’s AI team is the nature of the game itself.

https://www.flickr.com/photos/adavey/albums/72157604419475847/page2

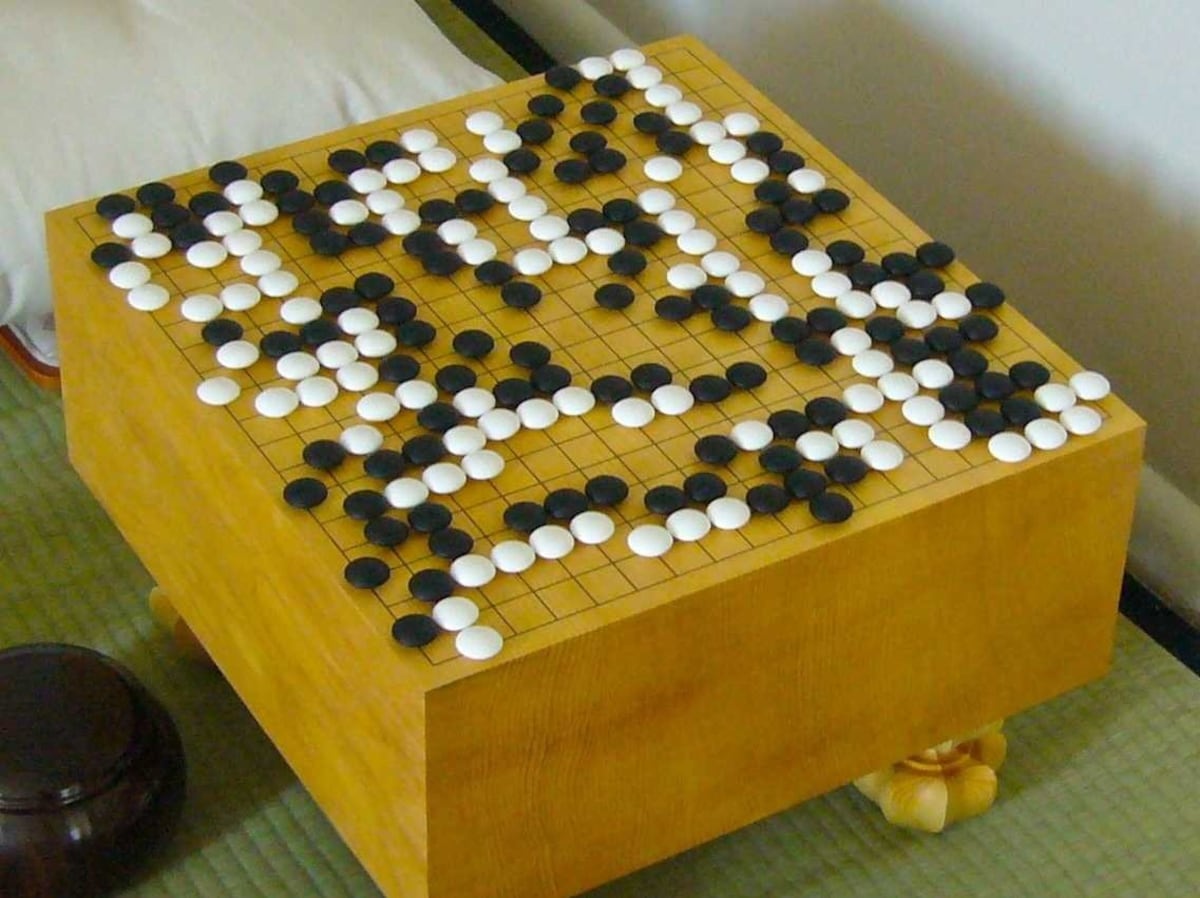

Created more than 2,500 years ago in China, go has simple rules, but requires a mixture of complicated strategy and foresight.

http://www.cosumi.net/play.html

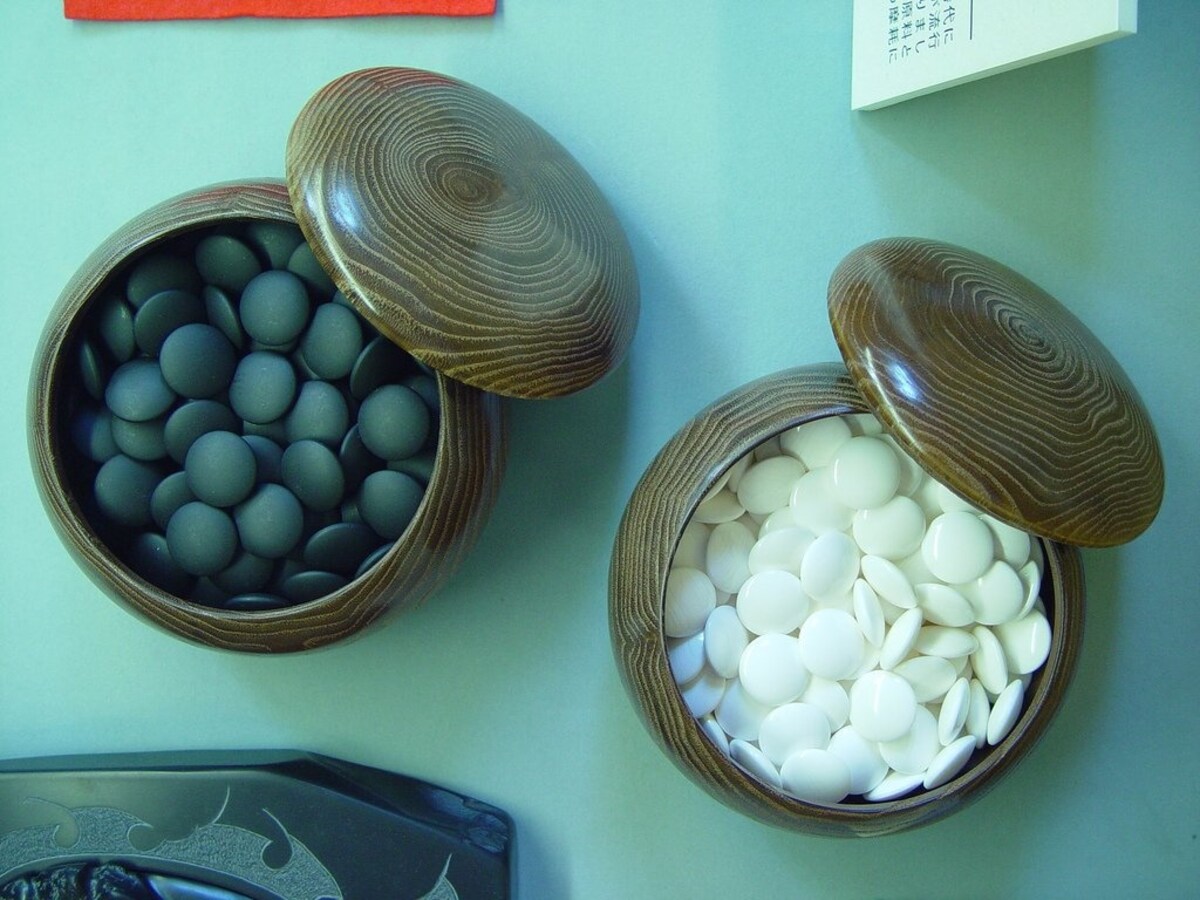

The game begins with an empty board. Unlike chess, which has six different kinds of pieces, go has only one type of stone; the players’ stones differ only in color, black or white. The two players take turns, each placing one of their stones on any vacant intersection of the lines.
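For readers who like to see rules as code, the placement rule can be sketched as a tiny board model. This is a minimal illustration, not taken from any real go program: the `Board` class, the `"."`/`"B"`/`"W"` markers and the `place` method are hypothetical names, and it assumes black moves first (the standard convention, though not stated above). It deliberately ignores captures and the finer legality rules.

```python
EMPTY, BLACK, WHITE = ".", "B", "W"


class Board:
    """A bare-bones go board: a grid of intersections, each empty or holding one stone."""

    def __init__(self, size: int = 19) -> None:
        self.size = size
        self.grid = [[EMPTY] * size for _ in range(size)]
        self.to_move = BLACK  # assumption: black plays first, the usual convention

    def place(self, row: int, col: int) -> None:
        """Put the current player's stone on a vacant intersection, then pass the turn."""
        if not (0 <= row < self.size and 0 <= col < self.size):
            raise ValueError("off the board")
        if self.grid[row][col] != EMPTY:
            raise ValueError("intersection already occupied")
        self.grid[row][col] = self.to_move
        # Players simply alternate: black, white, black, ...
        self.to_move = WHITE if self.to_move == BLACK else BLACK
```

For example, on a small 9×9 board, `b = Board(9); b.place(4, 4); b.place(2, 2)` puts a black stone at (4, 4) and a white one at (2, 2), and trying to play at (4, 4) again raises `ValueError`.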

Once played, stones are never moved. However, they may be “captured,” in which case they are removed from the board. You capture stones by completely surrounding them, that is, by occupying every vacant intersection directly adjacent to them along the lines. For example, a lone black stone with white stones on three of its four sides is just one white move away from being captured.
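“Completely surrounding” can be made precise: connected stones of one color form a group, the empty points touching that group are its liberties (the standard go term), and a group with no liberties left is captured. Here is a minimal flood-fill sketch of that check. The board-as-list-of-strings format and the function name `group_and_liberties` are hypothetical choices made for illustration; the sketch only counts liberties and does not remove stones.

```python
from collections import deque


def group_and_liberties(grid, row, col):
    """Flood-fill the group of same-colored stones containing (row, col).

    `grid` is a square list of strings using "." (empty), "B" and "W".
    Returns the set of stone coordinates in the group and the set of empty
    intersections orthogonally adjacent to it (its liberties).
    """
    color = grid[row][col]
    size = len(grid)
    group, liberties = {(row, col)}, set()
    queue = deque([(row, col)])
    while queue:
        r, c = queue.popleft()
        for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
            if not (0 <= nr < size and 0 <= nc < size):
                continue  # past the board edge: no neighbor here
            neighbor = grid[nr][nc]
            if neighbor == ".":
                liberties.add((nr, nc))
            elif neighbor == color and (nr, nc) not in group:
                group.add((nr, nc))
                queue.append((nr, nc))
    return group, liberties
```

In the three-sided example above, the black stone has exactly one liberty left; once white fills it, the liberty set is empty and the stone would be captured:

```python
position = [".....", "..W..", ".WB..", "..W..", "....."]
group_and_liberties(position, 2, 2)  # ({(2, 2)}, {(2, 3)})
```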

But the goal isn’t to capture as many stones as possible. The main object of the game is to use your stones to form territories and claim as much of the board as possible. The more you play, the more you’ll discover the countless patterns and moves that make the game so intriguing.
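To give a feel for what “territory” means, here is a deliberately simplified counting sketch: each connected region of empty points is credited to a color only if every stone bordering it is that color. Real scoring rules also account for dead stones, captured stones and compensation for playing second, none of which this handles. The function name `count_territory` and the board format are hypothetical.

```python
from collections import deque


def count_territory(grid):
    """Count empty points enclosed by each color (simplified; ignores dead stones).

    An empty region (flood-filled through orthogonal neighbors) is credited
    to a color only if every stone bordering it is that color; regions that
    touch both colors, or no stones at all, count for nobody.
    """
    size = len(grid)
    seen = set()
    score = {"B": 0, "W": 0}
    for r in range(size):
        for c in range(size):
            if grid[r][c] != "." or (r, c) in seen:
                continue
            region, borders = [], set()
            queue = deque([(r, c)])
            seen.add((r, c))
            while queue:
                cr, cc = queue.popleft()
                region.append((cr, cc))
                for nr, nc in ((cr - 1, cc), (cr + 1, cc), (cr, cc - 1), (cr, cc + 1)):
                    if not (0 <= nr < size and 0 <= nc < size):
                        continue
                    point = grid[nr][nc]
                    if point == ".":
                        if (nr, nc) not in seen:
                            seen.add((nr, nc))
                            queue.append((nr, nc))
                    else:
                        borders.add(point)  # remember which colors wall this region in
            if len(borders) == 1:
                score[borders.pop()] += len(region)
    return score
```

On a 5×5 board where a black wall in column 2 encloses the two left-hand columns, black owns those 10 points, while column 3 touches both colors and belongs to nobody:

```python
count_territory(["..B.W"] * 5)  # {'B': 10, 'W': 0}
```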

In fact, the sheer number of possible moves is what makes go such a complicated game to learn. In chess, the first two moves (one by each player) can be played in 400 different ways; in go, which is played on a 19×19 board, there are close to 130,000.
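Where do those figures come from? A quick back-of-the-envelope check, assuming “the first two moves” means one move by each player and ignoring board symmetry: in chess each side always has 20 legal opening moves, while in go the first player can choose any of the 361 points on a 19×19 board and the second player any of the 360 that remain.

```python
# Chess: each side has 20 legal first moves (16 pawn moves + 4 knight moves).
chess_first_moves = 16 + 4
chess_after_two = chess_first_moves * chess_first_moves  # 400

# Go: the first player can use any of the 361 points of a 19x19 board;
# the second player can then use any of the 360 points still vacant.
go_points = 19 * 19
go_after_two = go_points * (go_points - 1)  # 129,960

print(chess_after_two, go_after_two)  # prints "400 129960"
```

The gap only widens from there, since almost every empty point stays a legal option for most of a go game.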